Hello everyone, thanks for coming back to the last tutorial in the DATA TRANSFORMATION category of the HDPCD certification. We are going to pick up where we left off in the previous tutorial, in which we saw how to define an ALIAS for a function present in a JAR file. In this tutorial, we are going to see how to invoke/call that ALIAS to perform the desired operation.

Let us start then.

We are going to follow the process shown in the infographic below.

Please follow the steps below one by one.

- CREATING INPUT CSV FILE IN LOCAL FILE SYSTEM

We can create the input CSV file using the vi editor, as we have done many times in the past.

I have uploaded this input CSV file to the HDPCD repository on my GitHub profile under the name “37_input_UDF_invoke.csv”. You can download this file by clicking here, and it looks something like this.


1,Richard,Hernandez,XXXXXXXXX,XXXXXXXXX,6303 Heather Plaza,Brownsville,TX,78521

526,Kimberly,Barrett,XXXXXXXXX,XXXXXXXXX,7988 High Jetty,Brownsville,TX,78521

You can use the following commands to create this input CSV file in the local file system.

vi post27.csv

############################

PASTE THE COPIED CONTENTS HERE

############################

cat post27.csv

The following screenshot shows the execution of the above commands.
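If you prefer not to paste into vi, a shell here-document can write the same file non-interactively. This is a minimal sketch that assumes a bash-compatible shell; it reuses the post27.csv file name from the commands above and the two sample records shown in the input file.

```shell
#!/usr/bin/env bash
# Write the two sample records to post27.csv without opening an editor.
# Quoting 'EOF' stops the shell from expanding anything inside the block.
cat > post27.csv <<'EOF'
1,Richard,Hernandez,XXXXXXXXX,XXXXXXXXX,6303 Heather Plaza,Brownsville,TX,78521
526,Kimberly,Barrett,XXXXXXXXX,XXXXXXXXX,7988 High Jetty,Brownsville,TX,78521
EOF

# Display the file to confirm its contents, just like the vi approach.
cat post27.csv
```

Either way, cat post27.csv should display the two records before you move on to HDFS.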

As the input CSV file is ready in the local file system, it is time to push it into HDFS.

- PUSHING INPUT CSV FILE FROM LOCAL FILE SYSTEM TO HDFS

You can use the following commands to push this file from the local file system to HDFS.

hadoop fs -mkdir /hdpcd/input/post27

hadoop fs -put post27.csv /hdpcd/input/post27

hadoop fs -cat /hdpcd/input/post27/post27.csv

The output of the above commands is shown in the following screenshot.

The above screenshot confirms that post27.csv was successfully copied to the HDFS directory /hdpcd/input/post27.

Now let us create the pig script.

- CREATING PIG SCRIPT TO INVOKE UDF

The objective of this pig script is to invoke a UDF present in a JAR file. To do that, we first need to register the JAR file, then define an ALIAS for the function, and finally invoke that ALIAS.

I have uploaded this pig script to the HDPCD repository on my GitHub profile under the name “38_UDF_invocation.pig”. You can download this file by clicking here, and it looks something like this.


-- this pig script demonstrates how to invoke a UDF in Apache Pig

-- LOAD command is used to load data from post27.csv into input_data pig relation

input_data = LOAD '/hdpcd/input/post27/post27.csv' USING PigStorage(',');

-- we need to register the jar file first with REGISTER command

REGISTER /usr/hdp/2.3.0.0-2557/pig/piggybank.jar;

-- defining an alias for the fully qualified class name UPPER

DEFINE upper org.apache.pig.piggybank.evaluation.string.UPPER;

-- invoking upper ALIAS for UPPER() function in piggybank.jar file

-- only first name and last name are extracted from this input file into upper_data pig relation

upper_data = FOREACH input_data GENERATE upper($1) as fname, upper($2) as lname;

-- STORE command is used to store upper_data in HDFS directory /hdpcd/output/post27

STORE upper_data INTO '/hdpcd/output/post27' USING PigStorage(' ');

Let us go through this pig script one command at a time.

input_data = LOAD '/hdpcd/input/post27/post27.csv' USING PigStorage(',');

The LOAD command loads the data stored in post27.csv into a pig relation called input_data, using PigStorage(',') to split each line on commas.

REGISTER /usr/hdp/2.3.0.0-2557/pig/piggybank.jar;

The REGISTER command registers piggybank.jar with the current pig session so that the classes inside it can be used in the script.

DEFINE upper org.apache.pig.piggybank.evaluation.string.UPPER;

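The DEFINE command creates the ALIAS upper for the fully qualified class name org.apache.pig.piggybank.evaluation.string.UPPER, which is present in the REGISTERed JAR file. From this point on, the script can call upper() instead of the full class name.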

upper_data = FOREACH input_data GENERATE upper($1) as fname, upper($2) as lname;

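This command invokes the ALIAS upper on the second and third columns (positional references $1 and $2, because Pig numbers columns starting from $0). These columns hold the first and last names, and the results are named fname and lname in the upper_data relation.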
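Before running the job, it can help to preview what the FOREACH ... GENERATE and STORE statements should produce. The sketch below approximates them with awk on a local copy of the file; it is only a sanity check, not how Pig executes the script, and it substitutes awk's built-in toupper() for the piggybank UPPER class. Because awk numbers fields from $1, Pig's $1 and $2 become awk's $2 and $3. The output file name expected_post27.txt is hypothetical.

```shell
#!/usr/bin/env bash
# Recreate the sample input so this snippet is self-contained
# (same two records as post27.csv).
cat > post27.csv <<'EOF'
1,Richard,Hernandez,XXXXXXXXX,XXXXXXXXX,6303 Heather Plaza,Brownsville,TX,78521
526,Kimberly,Barrett,XXXXXXXXX,XXXXXXXXX,7988 High Jetty,Brownsville,TX,78521
EOF

# Split each line on commas (like PigStorage(',')), upper-case the second
# and third fields (Pig's $1 and $2), and join them with a single space
# (like PigStorage(' ') in the STORE statement).
awk -F',' '{ print toupper($2) " " toupper($3) }' post27.csv > expected_post27.txt

cat expected_post27.txt
```

On the sample data this prints RICHARD HERNANDEZ and KIMBERLY BARRETT, one record per line, which is what we expect to find in the HDFS output directory once the pig script has run.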

Now, let us create this pig script with the help of the vi editor.

vi post27.pig

############################

PASTE THE COPIED CONTENTS HERE

############################

cat post27.pig

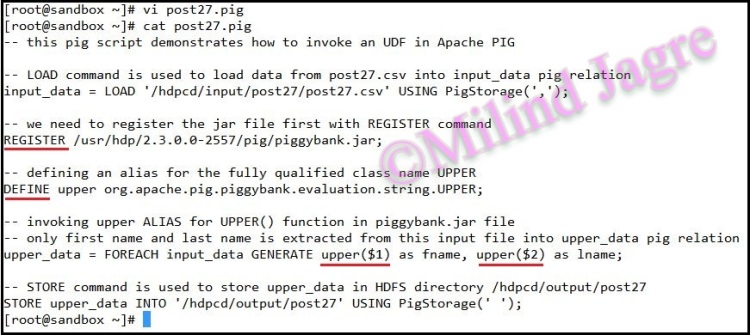

You can use the following screenshot as the reference for creating this pig script.

The above screenshot confirms that the pig script was created successfully.

Let us run this pig script now.

- RUN PIG SCRIPT

We can use the following command to run this pig script.

pig post27.pig

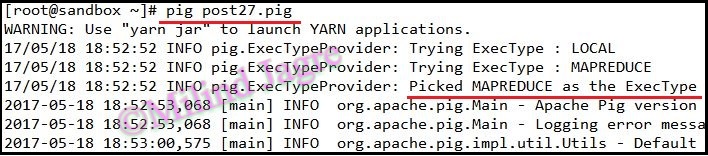

The execution of this pig script looks as follows.

And the output of this pig script looks like this.

As you can see from the above screenshot, the pig script executed successfully. A total of 2 records were read from the /hdpcd/input/post27 directory and 2 records were written to the /hdpcd/output/post27 directory.

This shows that no records were lost, but we still need to make sure that the upper ALIAS worked and produced the expected output.

Let us look at the output directory in HDFS to cross-check the output records.

- CHECK OUTPUT HDFS DIRECTORY

You can use the following commands to check the contents of the output HDFS directory.

hadoop fs -ls /hdpcd/output/post27

hadoop fs -cat /hdpcd/output/post27/part-m-00000

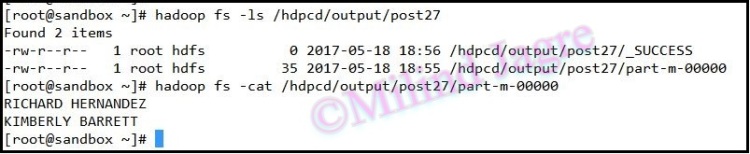

The execution of the above commands looks as follows.

As you can see from the above screenshot, both the first name and last name were printed in the UPPERCASE LETTERS which was the expected output of the upper ALIAS defined in the pig script.
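With only two records the screenshot is enough, but for a larger output a quick scripted check is handy: count the output lines that still contain lowercase letters, where 0 means upper was applied everywhere. This is a sketch; the helper name count_lowercase_lines is hypothetical, and a local printf stands in for the hadoop fs -cat stream so the snippet runs without a cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads lines on stdin and prints how many of them
# still contain at least one lowercase letter.
count_lowercase_lines() {
  # grep -c prints 0 when nothing matches but exits with status 1,
  # so "|| true" keeps a clean result from looking like a failure.
  grep -c '[[:lower:]]' || true
}

# On the cluster you would pipe the job output into the helper:
#   hadoop fs -cat /hdpcd/output/post27/part-m-00000 | count_lowercase_lines
# Here a local sample of the expected output stands in for that stream.
printf 'RICHARD HERNANDEZ\nKIMBERLY BARRETT\n' | count_lowercase_lines
```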

This concludes the tutorial. With it, I am happy to announce that we have completed the DATA TRANSFORMATION section of the HDPCD certification. From the next tutorial onwards, we are going to start the DATA ANALYSIS section, which is the last one in the HDPCD certification series.

I hope the contents are making sense and you are getting what I want to convey.

You can check out my website at www.milindjagre.com

You can check out my LinkedIn profile here. Please like my Facebook page here. Follow me on Twitter here and subscribe to my YouTube channel here for the video tutorials.

See you soon.

Cheers!
